Data Scraping vs Manual Data Collection: What Works Better?

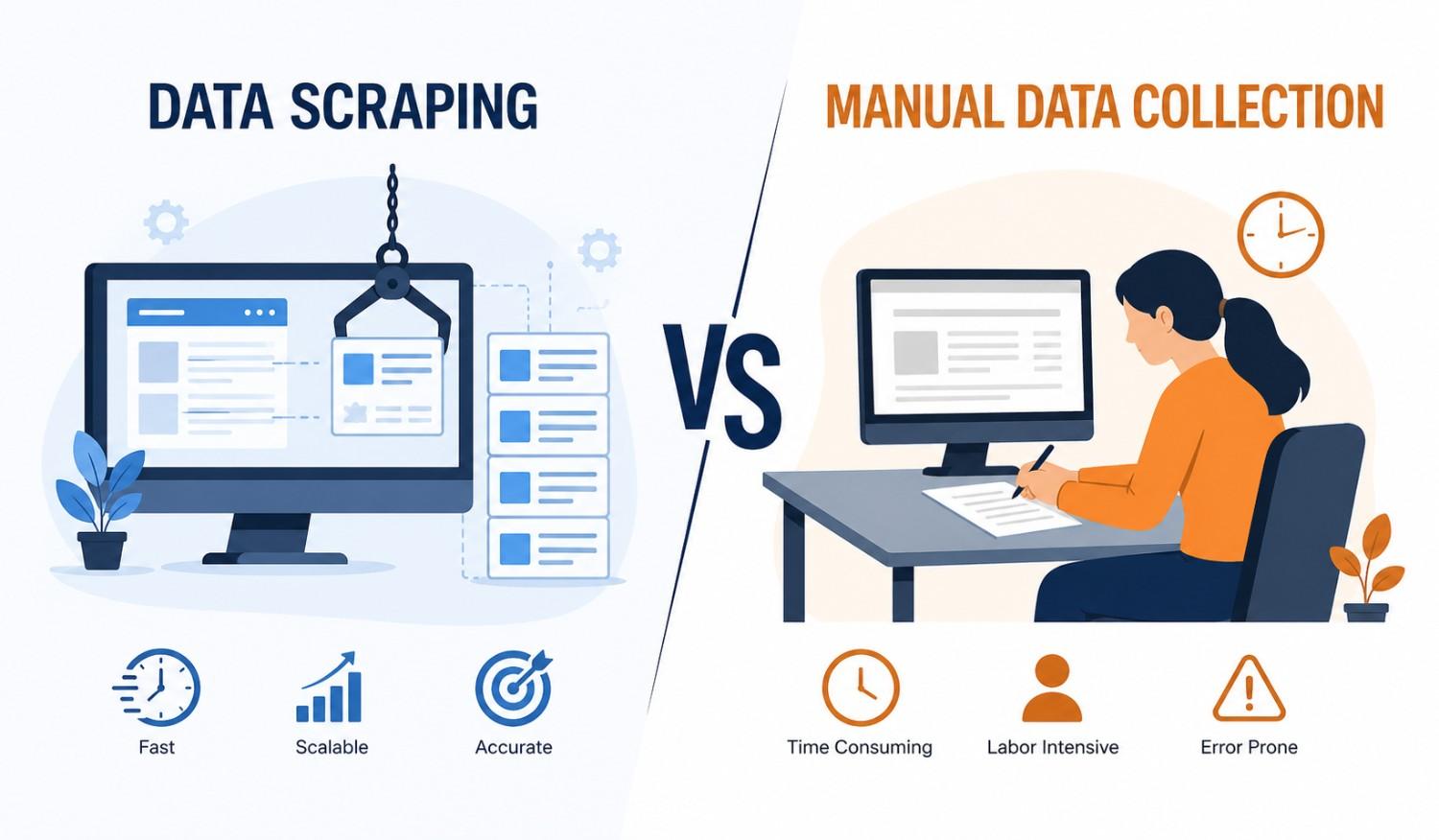

Data has quietly become the fuel behind modern business decisions and, occasionally, the cause of late-night spreadsheet anxiety. Teams once relied on manual effort (rows, columns, and a generous dose of patience) to gather insights. Today, automation is rewriting that playbook. The debate between data scraping and manual data collection is no longer theoretical; it's operational. One promises speed and scale, the other control and familiarity. So, which works better? As is often the case in technology, the answer depends on context, but the differences are worth unpacking carefully.

What Is Manual Data Collection?

Manual data collection is the traditional approach of gathering information by hand: copying details from websites, recording survey responses, or entering records into spreadsheets. It sounds simple (and it is), but that simplicity comes with repetition. Many teams know the slow rhythm of click, copy, paste, repeat, and know how accuracy begins to slip as the hours pass. That said, manual collection still holds value in small, highly specific tasks where precision matters more than speed, or where setting up automation would be overkill.

What Is Data Scraping?

Data scraping, which in this context usually means web scraping, is the automated extraction of data from websites using tools or scripts. Instead of relying on human effort, software does the heavy lifting quickly, consistently, and at scale. Think of it as a digital assistant that never tires (and never complains about repetitive tasks). Businesses often turn to a web scraping services company when they need large volumes of structured data efficiently. The result is faster access to insights, letting teams act on current information rather than outdated snapshots.
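To make the idea concrete, here is a minimal sketch in Python using only the standard library. It assumes a hypothetical product listing where each item is an `<li class="product">` containing `product-name` and `price` elements; a real site would use different markup, and larger projects usually rely on a dedicated parsing library. The `fetch` helper shows how a live page could be downloaded, but the example parses an inline sample so it runs offline.

```python
import urllib.request
from html.parser import HTMLParser
from typing import Dict, List, Optional

# Hypothetical markup: real sites use their own tags and class names.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="product-name">Desk Lamp</span><span class="price">$24.99</span></li>
  <li class="product"><span class="product-name">Office Chair</span><span class="price">$149.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Turns each <li class="product"> into a {"name": ..., "price": ...} record."""

    # Maps (hypothetical) CSS class names to the record fields they hold.
    FIELD_CLASSES = {"product-name": "name", "price": "price"}

    def __init__(self) -> None:
        super().__init__()
        self.records: List[Dict[str, str]] = []
        self._current: Optional[Dict[str, str]] = None  # record being built
        self._field: Optional[str] = None  # field whose text is being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "product" in classes:
            self._current = {}  # a new product item starts here
        for cls in classes:
            if cls in self.FIELD_CLASSES:
                self._field = self.FIELD_CLASSES[cls]

    def handle_data(self, data):
        # Only keep non-blank text that sits inside a known field of a product.
        if self._current is not None and self._field and data.strip():
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        self._field = None
        if tag == "li" and self._current is not None:
            self.records.append(self._current)  # product item finished
            self._current = None


def fetch(url: str) -> str:
    """Download a page (not called below, so the example runs offline)."""
    # A production scraper would also set a descriptive User-Agent,
    # check robots.txt, and pause between requests.
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def scrape_products(html: str) -> List[Dict[str, str]]:
    """Extract product records from one page of HTML."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.records


if __name__ == "__main__":
    for row in scrape_products(SAMPLE_HTML):
        print(row)
```

Running the script prints one dictionary per product. The same function works unchanged whether it is fed two items or two thousand, which is where the speed and scale advantages come from; the flip side is that if the site renames a class, the parser quietly returns fewer or incomplete records.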

Speed and Efficiency Comparison

Speed is where the contrast becomes almost comical. Manual data collection moves at human pace: measured, careful, and sometimes painfully slow. A scraper, by contrast, can process in minutes what might take a person hours or days. In competitive markets, this difference isn't just noticeable; it's decisive. Faster data means faster decisions, and faster decisions often translate into better outcomes. Manual methods may feel reliable, but they rarely keep pace with the demands of modern, data-driven environments.

Accuracy and Reliability

Accuracy in manual data collection depends heavily on human attention, and humans, as it turns out, are not immune to fatigue. Small errors such as typos, skipped rows, or misplaced values can creep in unnoticed during repetitive tasks. Automated scraping applies the same extraction rules every time, which reduces those mistakes. It isn't flawless either: poorly structured websites or unexpected layout changes can break extraction, sometimes silently. Both methods require oversight, but automation tends to deliver more uniform results over time, particularly with large datasets.
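One common form of that oversight is validating every scraped batch before it reaches analysis. The sketch below assumes records shaped like `{"name": ..., "price": ...}` and a US-dollar price format; the schema, the regular expression, and the 5% error threshold are all illustrative assumptions rather than standard values. A sudden jump in failed records is often the first visible sign that a site's layout has changed.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical schema and price format; adjust to the data actually collected.
REQUIRED_FIELDS = ("name", "price")
PRICE_PATTERN = re.compile(r"^\$?\d+(?:,\d{3})*(?:\.\d{2})?$")


def validate_record(record: Dict[str, str]) -> List[str]:
    """Return a list of problems found in one scraped record (empty if none)."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not (record.get(field) or "").strip():
            problems.append(f"missing {field}")
    price = (record.get("price") or "").strip()
    if price and not PRICE_PATTERN.match(price):
        problems.append(f"unparseable price: {price!r}")
    return problems


def check_batch(records: List[Dict[str, str]], max_error_rate: float = 0.05) -> Tuple[bool, dict]:
    """Accept a batch only if the share of invalid records stays within the threshold.

    An empty batch is rejected too, since it usually means extraction broke.
    """
    if not records:
        return False, {"total": 0, "failed": 0, "errors": {}}
    errors = {}
    for index, record in enumerate(records):
        problems = validate_record(record)
        if problems:
            errors[index] = problems
    error_rate = len(errors) / len(records)
    report = {"total": len(records), "failed": len(errors), "errors": errors}
    return error_rate <= max_error_rate, report
```

A rejected batch can then be held back for a person to review, which keeps the consistency of automation while leaving the final judgment with a human.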

Scalability and Data Volume

Scaling manual data collection is a bit like trying to fill a lake with a bucket—it works, but not efficiently. As data needs grow, so does the time and workforce required. Data scraping flips this equation entirely. Once set up, it can handle massive volumes of information with minimal additional effort. Whether collecting hundreds or millions of data points, the process remains largely the same. For businesses aiming to expand their data capabilities, scalability quickly becomes a deciding factor.

Cost Considerations

At first glance, manual data collection may seem cost-effective—no specialized tools, no technical setup. However, hidden costs tend to accumulate (time, labor, and inevitable errors). Data scraping requires an upfront investment, whether in tools or expertise, but often delivers better long-term value. Automation reduces repetitive work and frees teams to focus on analysis rather than collection. Over time, the balance shifts, and what seemed expensive initially often proves more economical.
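The point at which automation pays off can be estimated with simple arithmetic. Suppose scraping has a one-time setup cost S plus a per-run cost a, while manual collection costs m per run. After n runs, automation is no more expensive once n * (m - a) >= S. The function below computes that threshold; the figures in the usage note are hypothetical placeholders, not benchmarks.

```python
import math
from typing import Optional


def break_even_runs(
    setup_cost: float,
    manual_cost_per_run: float,
    automated_cost_per_run: float,
) -> Optional[int]:
    """Smallest number of runs after which automation costs no more than manual work.

    Total manual cost after n runs:    n * manual_cost_per_run
    Total automated cost after n runs: setup_cost + n * automated_cost_per_run
    Returns None when automation saves nothing per run and so never breaks even.
    """
    savings_per_run = manual_cost_per_run - automated_cost_per_run
    if savings_per_run <= 0:
        return None
    return math.ceil(setup_cost / savings_per_run)


if __name__ == "__main__":
    # Hypothetical inputs, all in the same currency.
    print(break_even_runs(setup_cost=2000, manual_cost_per_run=220, automated_cost_per_run=20))
```

With those hypothetical inputs the function returns 10: on the tenth run both approaches have cost 2,200, and every run after that favors automation. Ongoing maintenance for site changes raises the per-run automated cost and pushes the break-even point later, which is why the calculation is worth redoing with real figures.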

Flexibility and Use Cases

Manual data collection shines in situations requiring nuance—small datasets, qualitative insights, or one-off research tasks. It offers flexibility without technical constraints. Data scraping, meanwhile, excels in structured, repeatable processes such as market research, price monitoring, or competitor analysis. Each method has its place, and choosing the right one depends on the task at hand. In many cases, businesses find that combining both approaches creates the most effective workflow.

Compliance and Ethical Considerations

Data collection, whether manual or automated, must stay within legal and ethical boundaries. Manual methods tend to raise fewer compliance questions because a person reviews each record as it is gathered, although privacy laws still apply to personal data however it is collected. Data scraping requires closer attention to website terms of service, robots.txt directives, data privacy laws, and usage rights. Ignoring these can lead to blocked access, legal disputes, or regulatory penalties. Responsible data practices help ensure not only legal safety but also long-term sustainability in how businesses gather and use information.
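One concrete, automatable part of respecting website policies is checking a site's robots.txt file before crawling, and Python's standard library includes `urllib.robotparser` for exactly this. The sketch parses a hypothetical robots.txt inline so it runs offline; against a live site, the parser's `set_url()` and `read()` methods would fetch the real file. The user-agent string `ExampleBot/1.0` is a placeholder. Keep in mind that robots.txt only expresses crawling preferences: passing this check says nothing about terms of service, copyright, or privacy law.

```python
from typing import Tuple
from urllib import robotparser

# Hypothetical robots.txt; a real one would be fetched from the site's /robots.txt path.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /account/
Crawl-delay: 5
"""

USER_AGENT = "ExampleBot/1.0"  # placeholder identifier for the scraper


def build_policy(robots_txt: str) -> robotparser.RobotFileParser:
    """Parse robots.txt text into a policy object."""
    policy = robotparser.RobotFileParser()
    policy.parse(robots_txt.splitlines())
    return policy


def plan_request(policy: robotparser.RobotFileParser, url: str) -> Tuple[bool, float]:
    """Return (allowed, seconds_to_wait_before_request) for one URL."""
    allowed = policy.can_fetch(USER_AGENT, url)
    delay = policy.crawl_delay(USER_AGENT) or 0  # None when no Crawl-delay is set
    return allowed, float(delay)


if __name__ == "__main__":
    policy = build_policy(SAMPLE_ROBOTS_TXT)
    for url in ("https://example.com/products/desk-lamp", "https://example.com/checkout/cart"):
        print(url, plan_request(policy, url))
```

Product pages come back as allowed with a five-second pause, while checkout and account pages are refused, so the scraper can skip them before sending a single request.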

A Real-World Observation

A familiar scenario often unfolds in growing teams—what begins as a manageable manual process can quickly become a bottleneck. One team, for instance, spent days compiling competitor pricing data, only to find it outdated by the time analysis began. Transitioning to automated scraping changed the dynamic entirely. Data became current, workflows improved, and the team finally focused on insights rather than collection. The lesson was clear (and slightly humbling): effort alone doesn’t guarantee efficiency.

Manual Data Collection vs Data Scraping: Side-by-Side Comparison

Manual data collection offers control and simplicity, but struggles with speed and scale. Data scraping delivers efficiency and volume but requires technical setup and oversight. In terms of accuracy, automation tends to be more consistent, while manual methods rely on human precision. Costs vary by scope, but automation often pays off in the long run. Each approach has strengths and limitations, making the choice less about superiority and more about suitability.

Which One Works Better for Your Business?

The better approach depends on business needs, resources, and data goals. Smaller tasks with limited scope may benefit from manual collection, while large-scale operations often require automation. Many organizations lean toward data scraping for its efficiency, especially when working with dynamic data sources. Partnering with a web scraping services company can simplify implementation and improve reliability. Ultimately, the right choice depends on how quickly and accurately decisions need to be made.

Best Practices for Choosing the Right Approach

Start by defining the volume and frequency of data required—this often clarifies the direction. Consider budget constraints alongside long-term value, not just immediate costs. Evaluate compliance requirements to avoid potential risks. Testing both methods on a smaller scale can reveal practical insights before full implementation. When complexity increases, seeking expert guidance can make a noticeable difference. The goal isn’t just to collect data—it’s to do so efficiently and responsibly.

Conclusion

Choosing between data scraping and manual data collection isn’t about declaring a winner—it’s about understanding priorities. Speed, scale, and efficiency often push businesses toward automation, while simplicity and control keep manual methods relevant. The most effective strategies rarely rely on one approach alone. Instead, they blend the strengths of both, adapting to changing needs. In a world driven by data, the real advantage lies not in how data is collected but in how intelligently it is used.

FAQs

1. What is the main difference between data scraping and manual data collection?

Data scraping uses automated tools to extract large volumes of information quickly, while manual data collection relies on human effort to gather data step by step.

2. Is data scraping legal?

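Data scraping is generally lawful when it respects website terms of service, copyright, and data privacy regulations, but the rules vary by jurisdiction and by the type of data collected. Responsible usage, and legal advice for sensitive or large-scale projects, is essential.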

3. When should businesses use manual data collection?

Manual methods are suitable for small-scale tasks, specialized research, or situations where automation may not be practical.

4. Can data scraping replace human effort completely?

Automation handles repetitive tasks efficiently, but human oversight remains important for analysis, validation, and decision-making.

5. How do businesses get started with data scraping?

Businesses can begin with basic tools or collaborate with experienced providers to build scalable and compliant data extraction solutions.